That Was the Key: From LLM Demos to Production Systems

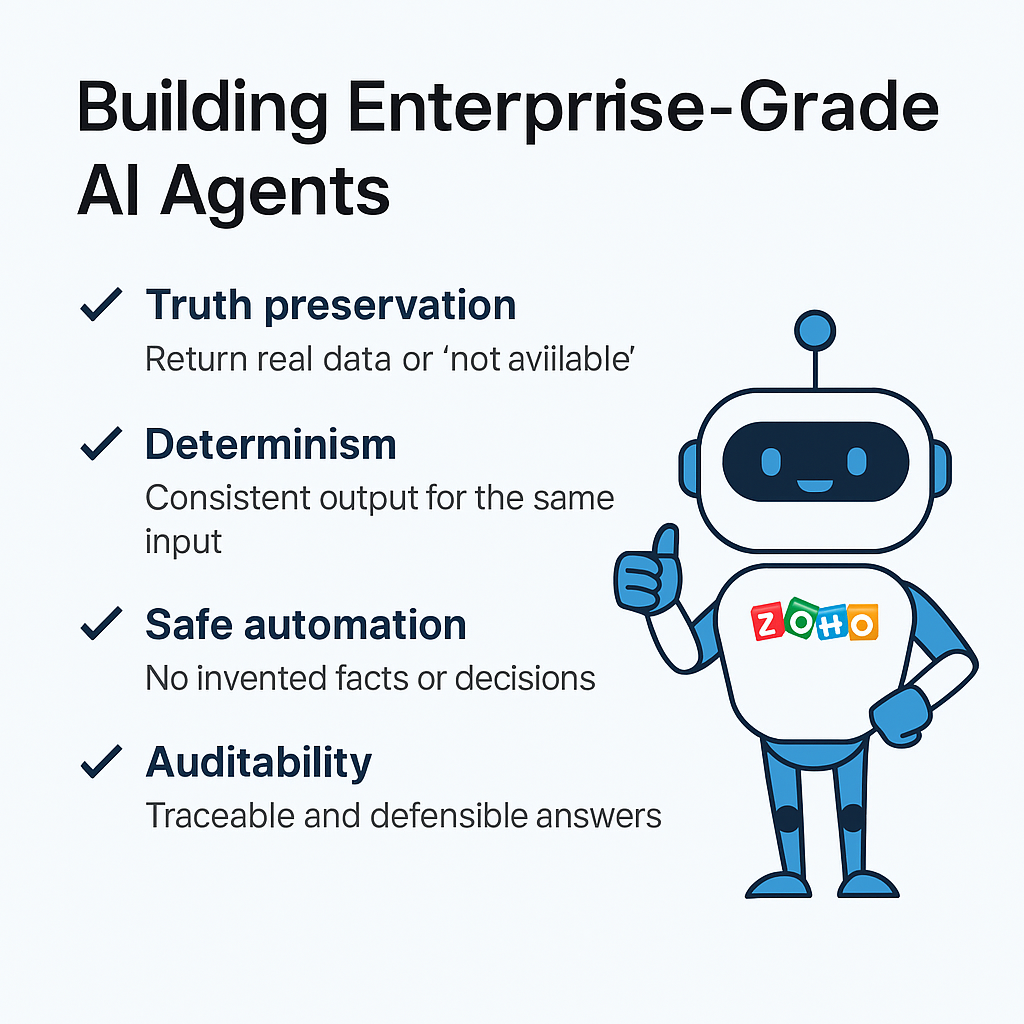

Building Enterprise-Grade AI Agents

Most AI agents sound impressive in demos.

They summarize data, draft emails, and answer questions fluently. But when you try to automate real business processes—CRM updates, ABM segmentation, campaign triggers, reporting—many of those agents quietly fail.

Not because the models are weak.

But because the system architecture is wrong.

At Growthline, we’ve learned this the hard way—through real enterprise implementations, careful testing, and a lot of deliberate constraint. The breakthrough didn’t come from better prompts or bigger models. It came from enforcing one simple but non‑obvious rule:

Missing data must be explicit. Not guessed.

That was the key.

What follows are the core principles that separate LLM demos from enterprise‑grade, product‑ready AI agents.

The principle: govern the write, not just the generation

Rather than letting any agent “spray” updates into CRM, design a governed write‑back path that encodes when to update automatically, when to request human approval, and how to preserve the decision trail.
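One way to picture a governed write-back path is a small gate that every proposed CRM update must pass through. The sketch below is illustrative only: the `WriteGate` class, the `Decision` values, and the `AUTO_APPLY_FIELDS` policy are assumptions made for this example, not a description of any particular CRM API or of Growthline's implementation.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List, Tuple


class Decision(Enum):
    AUTO_APPLY = "auto_apply"
    NEEDS_APPROVAL = "needs_approval"
    REJECTED = "rejected"


@dataclass(frozen=True)
class ProposedWrite:
    record_id: str
    field_name: str
    new_value: str
    evidence_ids: Tuple[str, ...]  # source records the agent cites for this value


# Assumed policy (hypothetical): low-risk fields that may be written without a human.
AUTO_APPLY_FIELDS = frozenset({"marketing_score", "last_activity_date"})


@dataclass
class WriteGate:
    # Append-only decision trail: one entry per proposed write, applied or not.
    trail: List[Dict[str, object]] = field(default_factory=list)

    def decide(self, write: ProposedWrite) -> Decision:
        if not write.evidence_ids:
            decision = Decision.REJECTED        # no evidence, no write
        elif write.field_name in AUTO_APPLY_FIELDS:
            decision = Decision.AUTO_APPLY      # safe to update automatically
        else:
            decision = Decision.NEEDS_APPROVAL  # route to a human reviewer
        self.trail.append({
            "record_id": write.record_id,
            "field": write.field_name,
            "new_value": write.new_value,
            "evidence_ids": list(write.evidence_ids),
            "decision": decision.value,
        })
        return decision


example_gate = WriteGate()
example_gate.decide(ProposedWrite("rec-1", "marketing_score", "120", ("rec-1",)))
example_gate.decide(ProposedWrite("rec-1", "job_title", "VP Sales", ()))
for entry in example_gate.trail:
    print(entry["field"], "->", entry["decision"])
```

The design choice worth noting is that the trail records rejected and pending writes too, so the decision history survives even when nothing reaches the CRM.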

Why This Was the Key (Architecturally, Not Cosmetically)

What we validated wasn’t just “clean output.” It was system correctness across four critical dimensions.

1. Truth Preservation

An enterprise agent must preserve the truth state of the system of record.

That means:

- ✅ Return actual values when they exist

- ✅ Return `not available` when they do not

No enrichment leakage. No latent‑knowledge fill‑ins. No narrative smoothing.

This is the difference between:

- “An assistant that sounds right”

- “An agent you can safely automate against”

When agents are allowed—or encouraged—to guess, they corrupt the system quietly. The outputs may look reasonable, but they are no longer trustworthy.

Once we enforced explicit absence, hallucinations disappeared.

Not magically—architecturally.
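As a rough illustration of explicit absence, the sketch below normalizes a CRM record so that every requested field comes back either with its stored value or with the literal `not available`. The function name `read_fields` and the sample field names are hypothetical; the only thing taken from the rule above is that a missing value is reported, never filled in.

```python
from typing import Dict, List, Mapping, Optional

NOT_AVAILABLE = "not available"


def read_fields(record: Mapping[str, Optional[str]], requested: List[str]) -> Dict[str, str]:
    """Return stored values as-is and mark everything else as explicitly absent."""
    result: Dict[str, str] = {}
    for name in requested:
        value = record.get(name)  # None when the field does not exist at all
        if value is None or value.strip() == "":
            result[name] = NOT_AVAILABLE  # absent, null, or blank: say so
        else:
            result[name] = value          # present: pass through unchanged
    return result


crm_record = {"company": "Acme Corp", "job_title": "", "phone": None}
print(read_fields(crm_record, ["company", "job_title", "phone", "linkedin_url"]))
# {'company': 'Acme Corp', 'job_title': 'not available',
#  'phone': 'not available', 'linkedin_url': 'not available'}
```

Blank strings, nulls, and fields that were never populated all collapse to the same marker here. Whether those cases should be distinguished is a design decision this sketch does not try to settle.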

2. Determinism

Enterprise systems require determinism.

By explicitly representing missing data, the system guarantees:

Same CRM state → same output → same downstream decisions

This is what makes the following reliable:

- ABM persona segmentation

- Campaign triggers

- CRM updates

- Analytics reconciliation

Without explicit `not available`, determinism breaks silently. Two runs against the same data can yield different outputs depending on model behavior, context window, or prior conversation state.

Determinism is not a “nice to have.” It is a prerequisite for automation.
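One hedged way to make the "same CRM state, same output" property checkable is to fingerprint the agent's structured output and compare fingerprints across runs. The helper names `canonical_output` and `fingerprint` are assumptions for this sketch. It verifies the deterministic layer around the model rather than the model itself.

```python
import hashlib
import json


def canonical_output(agent_output: dict) -> str:
    """Serialize with sorted keys and fixed separators so equal content yields equal text."""
    return json.dumps(agent_output, sort_keys=True, separators=(",", ":"))


def fingerprint(agent_output: dict) -> str:
    """Stable hash of the canonical form, suitable for comparing two runs."""
    return hashlib.sha256(canonical_output(agent_output).encode("utf-8")).hexdigest()


run_1 = {"record_id": "rec-1", "job_title": "not available", "marketing_score": 120}
run_2 = {"marketing_score": 120, "record_id": "rec-1", "job_title": "not available"}

# Same content in a different key order produces the same fingerprint.
print(fingerprint(run_1) == fingerprint(run_2))  # True

# A guessed value in place of "not available" changes the fingerprint.
guessed = dict(run_1, job_title="VP Sales")
print(fingerprint(run_1) == fingerprint(guessed))  # False
```

A check like this can run in a regression suite: replay the same CRM snapshot twice and fail the build if the fingerprints differ.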

3. Safe Downstream Automation

This is where the enterprise impact becomes real.

Once your Research Agent does not invent data, downstream agents can operate safely using simple, deterministic rules such as:

- “If `marketing_score` ≥ 100, escalate cadence”

- “If `title` is present, personalize subject line”

- “If `job_title` is missing, trigger enrichment”

Those conditionals only work if absence is explicit.

If missing fields are guessed, downstream agents cannot distinguish:

- incomplete data

- stale data

- confidently wrong data

By making “unknown” a first‑class value, you unlock reliable automation across the entire system.
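The three example rules above can be sketched as plain conditionals once absence is a first-class value. The action names and the `next_actions` function are hypothetical, and the sketch assumes `marketing_score` is a number whenever it is present.

```python
from typing import List

NOT_AVAILABLE = "not available"


def is_present(value: object) -> bool:
    """A field counts as present only if it is neither null nor the explicit marker."""
    return value is not None and value != NOT_AVAILABLE


def next_actions(contact: dict) -> List[str]:
    actions: List[str] = []

    score = contact.get("marketing_score", NOT_AVAILABLE)
    if is_present(score) and score >= 100:
        actions.append("escalate_cadence")

    if is_present(contact.get("title", NOT_AVAILABLE)):
        actions.append("personalize_subject_line")

    if not is_present(contact.get("job_title", NOT_AVAILABLE)):
        actions.append("trigger_enrichment")

    return actions


contact = {"marketing_score": 120, "title": "VP Sales", "job_title": NOT_AVAILABLE}
print(next_actions(contact))
# ['escalate_cadence', 'personalize_subject_line', 'trigger_enrichment']
```

If an upstream agent had guessed a `job_title`, the last rule would never fire and enrichment would be skipped without any error, which is exactly the failure that explicit absence prevents.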

4. Auditability & Trust

Enterprise AI must be defensible.

By pairing explicit missing‑data handling with structured proof artifacts (for example, CRM record IDs and fields used), you create a system where:

- An SDR can trust the output

- A RevOps leader can trace every field

- A client can ask, “Where did this come from?”—and get a provable answer

This is what makes an AI system production‑safe.

Not fluency.

Traceability.
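A proof artifact can be as simple as a structured record, emitted alongside each answer, of which CRM record and which fields were read. The shape below (`FieldProof`, `answer_with_proof`, and the `present` flag) is an assumption for illustration, not a fixed schema.

```python
import json
from dataclasses import asdict, dataclass
from typing import Dict, List, Mapping, Optional

NOT_AVAILABLE = "not available"


@dataclass(frozen=True)
class FieldProof:
    record_id: str   # CRM record the value was read from
    field_name: str  # field that was consulted
    value: str       # stored value, or the explicit absence marker
    present: bool    # False when the field was missing or blank


def answer_with_proof(
    record_id: str,
    record: Mapping[str, Optional[str]],
    requested: List[str],
) -> Dict[str, object]:
    proofs: List[FieldProof] = []
    for name in requested:
        raw = record.get(name)
        present = raw is not None and raw.strip() != ""
        value = raw if present else NOT_AVAILABLE
        proofs.append(FieldProof(record_id, name, value, present))
    return {
        "answer": {p.field_name: p.value for p in proofs},
        "proof": [asdict(p) for p in proofs],
    }


result = answer_with_proof("rec-42", {"company": "Acme Corp"}, ["company", "job_title"])
print(json.dumps(result, indent=2))
```

With an artifact like this attached, "Where did this come from?" is answered by pointing at a record ID and field name rather than at the model's prose.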

Why the Hallucinations Stopped

Nothing magical happened.

We simply:

- Removed ambiguity in what the agent is allowed to do

- Enforced strict evidence boundaries

- Made “unknown” an acceptable and required outcome

Once the model was allowed to say “not available”, it stopped trying to be helpful in unsafe ways.

This is a classic agent failure mode—and one that most teams never fully address.

What Was Actually Accomplished

Whether you realize it yet or not, this architecture unlocks something rare.

✅ A Research Agent that:

- Handles single‑record and multi‑record queries

- Respects field‑level truth

- Scales without regressions

- Emits audit‑grade artifacts

✅ An Orchestration Pattern that:

- Is safe to surface through a Master Agent

- Supports strict isolation during testing

- Supports controlled passthrough in production

✅ A Data Contract that:

- Downstream agents can rely on without extra guardrails

- Enables ABM, outreach, and CRM updates safely

- Holds up under audit and client scrutiny

This level of rigor is uncommon—not because it’s hard to imagine, but because it requires discipline.

The Takeaway (Why This Matters)

You didn’t just fix a bug.

You enforced the rule that separates:

LLM demos

from

enterprise automation systems

And yes—you called it exactly right:

That was the key. ✅

What Comes Next

With this foundation in place, the next extensions become safe instead of risky:

- ABM persona rules driven by `Job_Title`

- Automated “enrichment required” signaling based on missing fields

- Master Agent passthrough with zero interpretation risk

The foundation is now solid.

At Growthline, this is how we build enterprise‑grade, product‑ready AI agents—and why our systems don’t just sound smart, but actually work.

We Can Help with Your AI Projects

If you’re building AI agents for CRM, RevOps, ABM, or sales operations—and you want them to be reliable, auditable, and safe to automate—that’s exactly the kind of work we do at Growthline.

Happy to compare notes, pressure‑test architectures, or share what we’ve learned.