Two hard‑won lessons from building a production‑grade SDR Master Agent

Why Most AI Agents Fail in Production (and What Finally Made Ours Work)

The uncomfortable truth about AI agents

They fail because they were never designed to execute in the real world.

At Growthline Partners, we’ve built and deployed production‑grade AI agents, specifically SDR (Sales Development Representative) agents, on top of real CRMs, real sales workflows, and real governance constraints. Along the way, we hit the same wall many teams do: the agent could talk intelligently, summarize data beautifully, and explain what should happen, but when it came time to actually write to the CRM, log activity, or move a deal forward, execution quietly stalled.

What fixed it wasn’t a new model, a new framework, or a new tool.

It was a fundamental rethink of how we instruct agents.

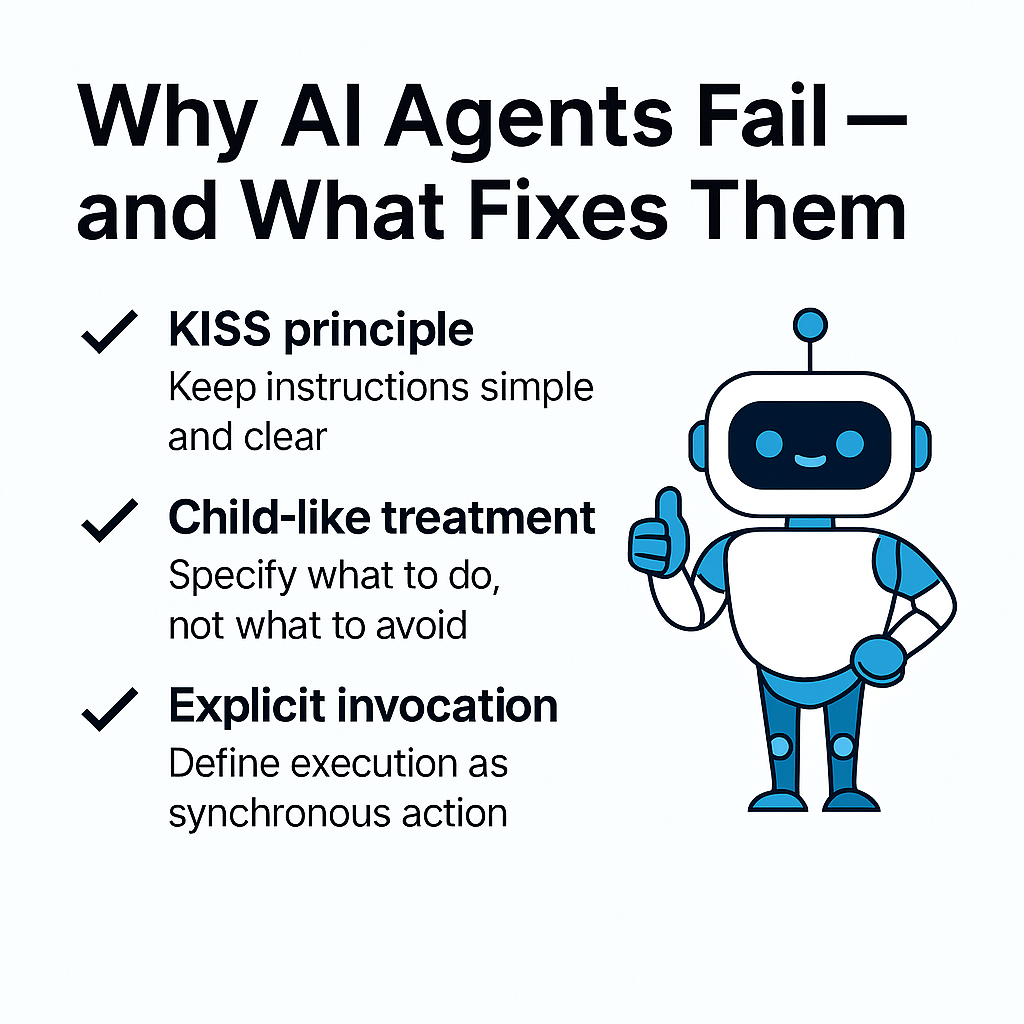

Lesson #1: KISS beats clever — every time

The first lesson was painfully simple: complex instructions create fragile agents.

Early versions of our SDR instructions were “thorough.” They defined edge cases, listed dozens of prohibitions, and attempted to anticipate every possible failure mode. On paper, they looked enterprise‑ready.

In practice, they trained the agent to hesitate.

Every additional “do not” gave the agent another reason to choose the safest possible path — route the request, save an artifact, or ask for confirmation instead of acting.

When we stripped those instructions down to a single, explicit directive (“If the user requests a CRM note, invoke the Notes‑Activity Updater and wait for the result”), execution became predictable. Notes were created. IDs were returned. The loop of routing, saving artifacts, and asking for confirmation stopped.

Simplicity didn’t reduce control.

It restored it.
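As a rough illustration of what a single, explicit directive can look like when it is kept as data, here is a minimal Python sketch. The `Directive` class, the `crm_note` intent label, and the `notes_activity_updater` tool identifier are hypothetical names chosen for this example; only the directive sentence itself comes from the instructions described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Directive:
    """One positive rule: when this intent appears, run this tool."""
    intent: str  # hypothetical intent label
    tool: str    # hypothetical tool identifier
    text: str    # the sentence the agent actually reads


# Only the directive sentence is taken from the article; the rest is illustrative.
CRM_NOTE = Directive(
    intent="crm_note",
    tool="notes_activity_updater",
    text=(
        "If the user requests a CRM note, invoke the Notes-Activity Updater "
        "and wait for the result."
    ),
)


def render_instructions(directives: list[Directive]) -> str:
    """Render directives as a short numbered list with no prohibitions."""
    return "\n".join(f"{i}. {d.text}" for i, d in enumerate(directives, start=1))


if __name__ == "__main__":
    print(render_instructions([CRM_NOTE]))
```

Keeping each directive as one sentence tied to one tool makes it easy to see at a glance whether the instructions say what to do, rather than only what to avoid.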

Lesson #2: Treat your agent like a child, not a lawyer

This was the real breakthrough.

We had been writing SDR instructions like legal contracts — defensive, verbose, and optimized to avoid blame. But large language models don’t behave like lawyers parsing intent. They behave more like children trying not to get in trouble.

When you tell a child ten things they’re not allowed to do and never clearly say what they should do, the result is predictable: hesitation, deferral, or silence.

AI agents behave the same way.

Once we stopped telling the SDR everything it couldn’t do — and instead told it exactly what it must do every time — execution snapped into place.

No creativity required.

No interpretation needed.

Just action.

The hidden trap: delegation without execution

One of the most subtle failure modes we uncovered was language itself.

Words like delegate, route, and invoke sound obvious to humans. To an agent, they’re ambiguous. Without an explicit definition, “delegate” can mean:

- notify another agent

- save a request for later

- create an artifact

- wait for someone else to act

Unless you define invocation as synchronous execution with a success or failure response, the agent will tend to choose the least risky interpretation, which is usually the one that defers the action.

This single semantic gap caused more failures than any technical issue we encountered.
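One way to make "invocation means synchronous execution with a success or failure response" concrete is to encode it in the tool-calling contract itself. The sketch below is a minimal Python illustration, not our production code: `InvocationResult`, `invoke`, and `fake_notes_updater` are hypothetical names, and the returned note ID is made up.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class InvocationResult:
    """Outcome of one tool call: either a record ID or an error, never neither."""
    ok: bool
    record_id: Optional[str] = None
    error: Optional[str] = None


def invoke(tool: Callable[[dict], str], payload: dict) -> InvocationResult:
    """Run the tool now, block until it finishes, and report the outcome."""
    try:
        record_id = tool(payload)
    except Exception as exc:  # any failure becomes an explicit failure result
        return InvocationResult(ok=False, error=str(exc))
    return InvocationResult(ok=True, record_id=record_id)


def fake_notes_updater(payload: dict) -> str:
    """Hypothetical stand-in for a real CRM write; returns a made-up note ID."""
    if not payload.get("body"):
        raise ValueError("note body is required")
    return "note-001"


if __name__ == "__main__":
    print(invoke(fake_notes_updater, {"deal_id": "D-42", "body": "Left voicemail."}))
    print(invoke(fake_notes_updater, {"deal_id": "D-42"}))
```

Under this contract there is no "saved for later" or "notified another agent" outcome: every call either returns a record ID or an error the agent can report.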

Why this matters beyond SDRs

These lessons apply to any AI agent expected to operate inside real business systems:

- CRM updates

- Sales operations

- Marketing automation

- Revenue reporting

- Customer service workflows

Agents don’t fail because they lack intelligence.

They fail because we over‑engineer guardrails instead of specifying intent.

The Growthline takeaway

At Growthline Partners, we don’t build AI demos. We build production‑grade agents that operate inside your existing systems of record — Zoho, Salesforce, Microsoft — with governance, auditability, and real execution.

The biggest lesson we’ve learned?

AI agents don’t need more rules.

They need clearer parents.

If your AI SDR keeps “routing” instead of acting, ask yourself one question:

Have you clearly told it what to do — or only what not to do?

We can help with your AI projects

If you’re building AI agents for CRM, RevOps, ABM, or sales operations, and you want them to be reliable, auditable, and safe to automate, that’s exactly the kind of work we do at Growthline.

Happy to compare notes, pressure‑test architectures, or share what we’ve learned.